- SAP Community

- Products and Technology

- Technology

- Technology Q&A

- DB2 11.1.1.1 very increased physical reads after u...

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

DB2 11.1.1.1 very increased physical reads after upgrade from 10.5.7

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

on 07-11-2017 8:30 AM

Hello,

I'm solving problem with db storage overload.

When we upgraded DB2 10.5.7 LUW to DB2 11.1.1.1 LUW db is more or less twice reading from storage and this is overloading this storage. Average of Logical reading is simillar and also Buffer quality is still over 99%.

We also updated SAP kernel from 745pl317 to 745pl417.

My first question is: Do you have simillar experience that DB11.1 is reading more from storage than 10.5?

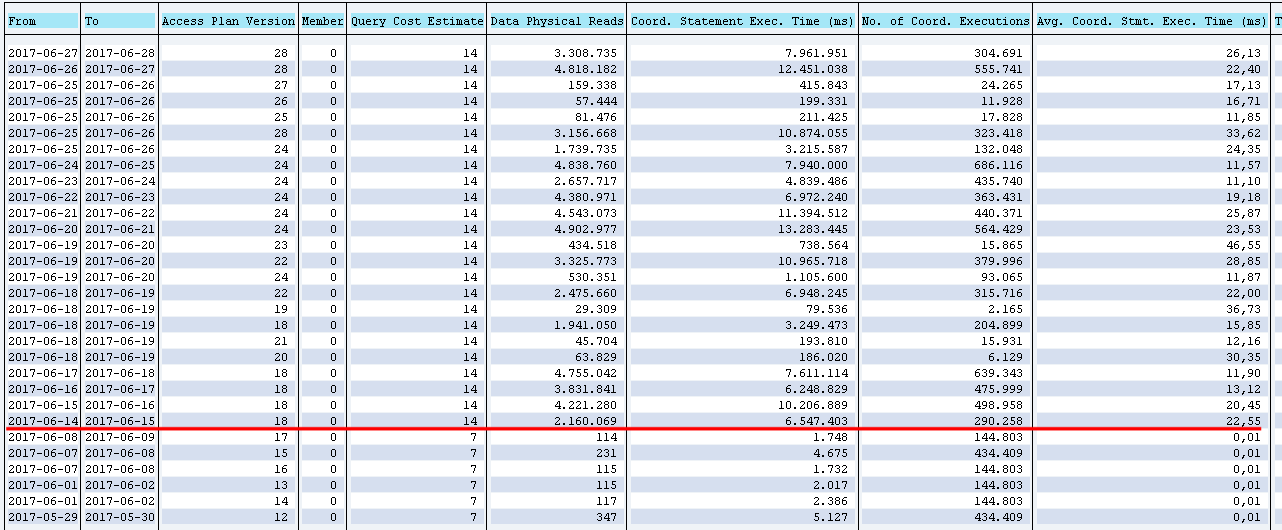

You can se my DB2 staistics in attachment screenshot-2362017-13-51-25.png

Charts of long time statistics for Logical and Physical reads.

Also when I sorted TOP SQL statements by Physical reads, there very increased physical reads for one insert statement "

INSERT INTO "SMENSAPT" VALUES( ?, ?, ? ) -- OPTLEVEL( 5 ) -- QUERY_DEGREE( 1 ) -- LOCATION( SAPLSMNU , 6520 ) -- SYSTEM( ESP , SAPESP )" (of course it is strange, that it is for insert). Before DB2 upgrade this statement read about 100 reads per day after upgrade it reads over 1 000 000 per day. screenshot-2862017-10-16-24.png

TOP SQL statemets for last 30 days screenshot-1172017-9-23-34.png

before upgrade: RHEL6.8, DB2 10.5.7, SAP kernel 745 pl 317

after upgrade: RHEL 6.8, DB2 11.1.1.1, SAP kernel 745 pl 417

update1:

We have also open OSS message in SAP long time, but still without clear answer.

We have affected QAS and PROD ECC system, DEV and SBX probably too but but there is not easz check it for low performance.

We also had turn off FAD

- rsdb/prefer_join_with_fda=0

- rsdb/prefer_join=1

for 10.5.7. how is recomended in note

2338139 - DB6: Performance of FOR ALL ENTRIES with MEMORY_TABLE

and we didn't turn on it imediately after upgrade. We tested turn on it on-line but without any improvemnet related to physical reads. Also SAP as first recomended torn off it, but with restart. So I'm not sure if these parameters are on-line or needed SAP instance restart.

I also changed these parameters during upgrade:

db2set DB2_DATABASE_CF_MEMORY=AUTO

db2 update dbm cfg using FCM_PARALLELISM AUTOMATIC

db2 update db cfg using SOFTMAX 0

B.R.

Martin Mikala

- SAP Managed Tags:

- IBM Db2 for Linux, UNIX, and Windows

Accepted Solutions (0)

Answers (4)

Answers (4)

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hello Martin,

Only my two cents - I would focus also on other changes in system during that time like changes in user roles & user menu & area menu or SAP menu rather than focusing purely on DB2 upgrade. From my view this does not look like DB2 issue.

Regards,

Peter

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hi Peter,

From my point of view is strange that logical data readings are more-less the same but increased only physical data reads. Indexes reading is also the same for logical and physical like before. And buffer hit ratio is still over 99%.

I found one suspicious SQL statement and this one is inserting into some table SMENSAPT.

This statement very increased physical reading after db upgrade/ maintenance day. From hundreds to milions.

But how check what this SQL statement reading from storage.

B.R.

Martin

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hi Martin,

ok. I am not expert in this area. Does FM MENU_GENERATE_SAP_MENU need to be run regularly?

As you can see the number of rows INSERTed is equal to the number of rows DELETEd. So index clean up for this table is important but this still does not explain the huge number of physical DATA reads.

Since the table can grow pretty big I believe using adaptive compression is a good idea.

The huge number of DELETE/INSERTS may also cause some physical data read overhead for free space search within the table. The PCT value calculated by your formula also suggests that there is some free space in your table. To test if free space search is a problem you can temporarily set APPEND ON for this table.

ALTER TABLE SMENSAPT APPEND ON

This can not be done for a long time because with this setting DB2 will not reuse any free space within the table. Still I am not aware of any changes in this area between V10.5 and V11.1. However if elimination free space search helps, this table may be a good candiadate for an insert time clustered table (ITC) table.

If the APPEND ON test helps we can describe how to convert the table to ITC.

In your previous post you mentioned the following parameter changes.

db2set DB2_DATABASE_CF_MEMORY=AUTO

db2 update dbm cfg using FCM_PARALLELISM AUTOMATIC

db2 update db cfg using SOFTMAX 0

Parameter DB2_DATABASE_CF_MEMORY is not relevant here since you are not using PureScale. FCM_PARALLELISM also does not play a role for this problem.

Since you have set SOFTMAX=0, the parameter PAGE_AGE_TRGT_MCR became effective. If you configured PAGE_AGE_TRGT_MCR too low, DB2 may very aggressivly flush changed pages out to disk. What is your current value of PAGE_AGE_TRGT_MCR? Still this does not neccessarily explain why those pages need to be physically read in again when the next INSERT is executed.

Regards

Frank

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hi Frank-Martin,

I'm using some default value of PAGE_AGE_TRGT_MCR.

db2 get db cfg for ESP | grep PAGE_AGE_TRGT_MCR

Target for oldest page in LBP (PAGE_AGE_TRGT_MCR) = 240

And I'm testing use this table with APEND ON in QAS and I probably also testing set SOFTMAX back and also turn on FDA.

thanks for fast answer and ideas

B.R.

Martin Mikala

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hi Frank-Martin

Yes, this insert statement were used also in DB2 10.5.7.

See history of this SQl statement to 10.6 (upgrade date) was this INSERT unexpensive (to access plan version 17) but from this time physical reads very increased for this statement.

This INSERT statement is used by this function module MENU_GENERATE_SAP_MENU.

On table SMENSAPT is set only row compesion. I year ago set adaptive compresion for more or less all tables, but probably not to too small.

db2 "select substr(TABNAME,1,15) as TABNAME, substr((FPAGES-NPAGES+MPAGES)*16384/1024/1024,1,10) as freeMB,substr(DECIMAL(DECIMAL(NPAGES+MPAGES)/DECIMAL(FPAGES),10,2)*100,1,5) as PCT, substr((NPAGES+MPAGES)*16384/1024/1024,1,10) as usedMB, substr(FPAGES*16384/1024/1024,1,10) as totalMB,COMPRESSION as COMP, ROWCOMPMODE as ROWM from syscat.tables where TABNAME like 'SMENSAPT' order by (FPAGES-NPAGES+MPAGES) DESC"

TABNAME FREEMB PCT USEDMB TOTALMB COMP ROWM

--------------- ---------- ----- ---------- ---------- ---- ----

SMENSAPT 1 68.00 4 5 R S

Here is table SMENSAPT statistics for last 7 days.

For automatic maintenance I'm using configuration from db2_update_db.sh script. One of latest version , released when I started DB2 11 upgrade on sandbox.

OSS 281730/2017

B.R.

Martin Mikala

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Report Inappropriate Content

Hi Martin,

this is indeed strange. If I understand your screen shot correctly there is also a huge NUMBER of INSERTs into this table. So this may cause allocation of new extents and therefore physical reads.

I do not see why there should be more physical reads on V11.1 for such INSERT statements than on V10.5?. Are you sure that those INSERTs were present on V10.5 too? Can you identify the application that executes those INSERT statements?

If the target table is not compressed or the compression dictionary is not good this may cause additional extent allocation. Can you check the compression mode of table SMENSAPT? Is it set to adaptive compression?

How does the workload on this table look like? Are only INSERTs happening on this table or DELETEs too? Have you configured automatic reorg on your database, to clean up pseudo deleted keys in the indexes of this table?

If you opened an incident for this problem and have an open service connection, you can send me the incident number.

Regarding FDA:

On DB6 FDA is currently used for ABAP SELECT ... FOR ALL ENTRIES statements only. So this does not affect INSERTs. You can find those FDA statements by filtering for "*FDA WRITE* in the ST04 SQL cache screen. I have seen some of those FDA statements in your screen shot. On V11.1 or V10.5 FP8+ FDA should work fine and we recommend to use it for multiple reasons ( mainly because if reduces network trafic between database server and application server ). With FDA ABAP SELECT ... FOR ALL ENTRIES statements are executed in one SQL statement. Before it was executed in multiple SQL statements that may fetch the same result rows multiple times. So with FDA less SQL statements are executed and network times and DBI processing will be reduced but the single statements may become more expensive. Overall it is usually much faster.

Regards

Frank

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

- Up Net Working Capital, Up Inventory and Down Efficiency. What to do? in Technology Blogs by SAP

- SAP Analytics Cloud - Performance statistics and zero records in Technology Blogs by SAP

- Part 2:- SAP Basis OS support on SUSE Linux for beginners in Technology Blogs by Members

- What’s New in SAP Analytics Cloud Release 2024.07 in Technology Blogs by SAP

- Workload Analysis for HANA Platform Series - 2. Analyze the CPU, Threads and Numa Utilizations in Technology Blogs by SAP

| User | Count |

|---|---|

| 86 | |

| 10 | |

| 10 | |

| 9 | |

| 6 | |

| 6 | |

| 6 | |

| 5 | |

| 4 | |

| 3 |

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.